The 17th annual edition of the Flash Memory Summit (FMS) was held at the Santa Clara Convention Center earlier this month with some 100 exhibitors and more than 3,000 registered attendees. FMS showcases the industry’s key applications, technologies, vendors, and innovative startups that are driving the multi-billion dollar high-speed memory and SSD markets. Started in 2006, FMS features the trends, innovations, and influencers driving the adoption of flash memory and other high-speed memory technologies that support demanding enterprise storage applications, high-performance computing, AI/ML, mobile and embedded systems from the edge to the core data center and the cloud.

In a Kioxia keynote presentation, the speaker stated that we are squarely in the “Big Data/Data-centric Era,” which at first blush does not seem like news. After all, big data is nothing new; it’s a term that goes back decades. But the “Data-centric” part is what’s really trending now, as the value of data rapidly increases with AI-enhanced analytics that drive innovation in just about every industry, from autonomous vehicles to drug discovery to visual effects in Media & Entertainment and much more. Accordingly, AI was a hot topic all over the show. In one conversation I had with an attendee, she revealed that her company had just added an “AI department” simply to help the company’s stock price! If the Data-centric Era is built on AI, then AI is built on data, and the more data that can be analyzed, the better the decision making. That means billions of files with large amounts of associated metadata, plus lots of copies that require copious amounts of storage, from low-latency hot storage to long-term cold storage that is both cost-effective and eco-friendly.

So at FMS the issue is not flash/SSDs vs. disk vs. tape; it’s storage optimization. With the data deluge in this “Big Data/Data-centric Era,” concerns are mounting about getting the right data in the right place at the right time, and at the right cost and energy consumption profile. Accordingly, we saw more dedicated archival content at FMS 2023, with at least eight companies on hand to speak on archival strategies, including ChannelScience, FUJIFILM Recording Media, Furthur Market Research, IBM, Ovation Data, Pithos LLC, Quantum, and SoFi.

Talking Tape and Flape at FMS

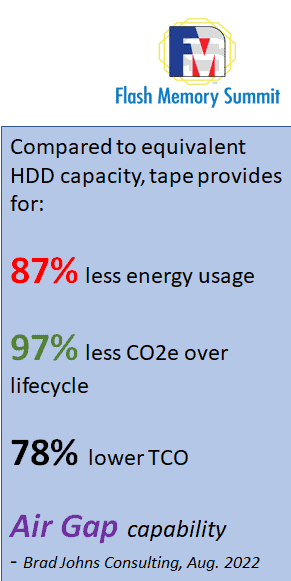

In one speaker panel session that I had the opportunity to moderate, archival strategy was definitely the focus, and I helped my industry colleagues make the case for today’s highly advanced, modern tape systems. In a nutshell, moving cold data to tape reduces energy use, CO2e emissions, TCO, and cyber threats. I made sure to emphasize to our audience that tape cartridges, whether online or offline, consume no energy unless they are being read or written by a tape drive, whereas HDDs spin 24/7, consuming energy and generating heat.

That set the stage for IBM to talk about the concept of “Flape,” that is, flash + tape, as a way to optimize storage performance and costs. Flash and tape systems are complementary: organizations can eliminate the middle tier of costly, energy-intensive HDD and simply rely on a flash layer for its outstanding performance gains, micro latency, macro efficiency, and enterprise reliability, then use tape for the best data streaming performance, unequaled cost-effectiveness, high capacity, scalability, and low power consumption and footprint.

Further making the case for tape, IBM pointed to an emerging big data science principle: the more data history one has, the better. Since we don’t necessarily know all the questions we might want to ask of our data in the future, it’s best to retain all the detailed history perpetually. That way we always have the flexibility to answer any new question we might think of, and as a bonus we gain visibility over an ever-larger data set as time goes by. Data sets retained over time and readily accessible in active archive environments provide insight into patterns that can be leveraged to drive better business decisions.

Leveraging the benefits of flash + tape is not a new concept, but it is one that appears to be benefiting from current market dynamics, as the cost of flash approaches that of HDD and the value proposition of tape (low cost, low energy, capacity/scalability, long archival life, air gap, etc.) becomes more relevant than ever.

Flape is a concept originally coined by the analyst firm Wikibon in 2012. Wikibon described “flape” as a combination of flash and tape, with the potential to save IT departments as much as 300% of their overall IT budget over the course of 10 years when used for long-term archiving. The concept behind the flape architecture is to place the most active data as well as the metadata (the data about the data) on flash and the rest of the (cold) data on tape. The combination of flash and tape provides IT with the right balance of performance and cost for a number of use cases.
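
To make the flape placement rule concrete, here is a minimal Python sketch of the idea as described above: metadata and active data go to flash, everything else (cold data) goes to tape. The 30-day "cold" cutoff and the names `DataObject`, `Tier`, and `place` are hypothetical illustrations, not part of Wikibon’s or IBM’s definition; in practice the policy would live in whatever tiering or active archive software manages the two layers.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Tier(Enum):
    FLASH = "flash"
    TAPE = "tape"


@dataclass
class DataObject:
    name: str
    last_access: datetime
    is_metadata: bool = False  # "the data about the data"


# Hypothetical threshold: data untouched for longer than this is treated as cold.
COLD_AFTER = timedelta(days=30)


def place(obj: DataObject, now: datetime, cold_after: timedelta = COLD_AFTER) -> Tier:
    """Return the tier for obj under a simple flape-style policy (illustrative only)."""
    # Metadata always stays on flash so the archive remains fast to search.
    if obj.is_metadata:
        return Tier.FLASH
    # Recently accessed (active) data stays on flash.
    if now - obj.last_access <= cold_after:
        return Tier.FLASH
    # Everything else is cold and goes to tape.
    return Tier.TAPE


if __name__ == "__main__":
    now = datetime(2023, 8, 31)
    objects = [
        DataObject("catalog.db", datetime(2021, 1, 1), is_metadata=True),
        DataObject("q3_sales.parquet", datetime(2023, 8, 20)),
        DataObject("2019_sensor_logs.tar", datetime(2019, 6, 1)),
    ]
    for obj in objects:
        print(obj.name, place(obj, now).value)
    # prints:
    # catalog.db flash
    # q3_sales.parquet flash
    # 2019_sensor_logs.tar tape
```

Keying the decision on metadata first is what lets the cold data sit on tape without losing discoverability: the catalog is always on the fast tier, so users can find what they need before any cartridge is mounted.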

Wikibon advised CIOs and CTOs to seriously consider a flape architecture that meets both the performance requirements of live, active data (best $/IOPS) and the budget requirements for long-term data retention (best $/TB). Given the investments being made in flash/SSD, tape, and HDD and the costs of each solution over time, a flape architecture makes sense, especially when looking at the manageability and environmental factors (power, cooling, floor space) of the solution. Flape solutions can enable IT to turn unmanageable, infrequently accessed “big data” into a true business asset, argued Wikibon.

So while big data is nothing new, it’s still a challenge in this “Big Data/Data-centric Era” where the value of data has never been greater. Given today’s market dynamics, the timing is right to leverage tape to support primary flash storage. An IBM executive once said, “the best way to afford more flash is to deploy tape systems”. Make that a flape system!